Google Cloud AI sets the pace across three key model capability frontiers

Google Cloud's Michael Gerstenhaber sees AI models advancing along three frontiers at once: raw intelligence, response time, and the cost of deploying at unpredictable scale.

As a product VP at Google Cloud, Michael Gerstenhaber spends most of his time on Vertex AI, the company's unified platform for deploying enterprise AI. That role gives him a wide-angle view of how businesses are actually applying AI models today — and the gaps that still need to be filled before agentic AI can deliver on its promise at scale.

In a recent conversation with Gerstenhaber, one idea stood out as especially different from the usual way people talk about model progress. In his framing, AI models are advancing across three frontiers at once: raw intelligence, response time, and a third frontier that is less about capability and more about economics — whether a model can be deployed cheaply enough to handle massive, unpredictable demand. It's an interesting way to map model capabilities, and it highlights the challenges that matter most to organisations trying to operationalise frontier-level AI.

Why don't you start by walking us through your experience with AI so far and what you do at Google?

I've been in AI for about two years now. I was at Anthropic for a year and a half, and I've been at Google for almost six months. I run Vertex AI, Google's developer platform. Most of our customers are engineers building their own applications. They want access to agentic patterns. They want access to an agentic platform. They want access to the inference of the world's smartest models. I provide them with that, but I don't provide the applications themselves. That's for Shopify, Thomson Reuters, and our various customers to provide in their own domains.

What drew you to Google?

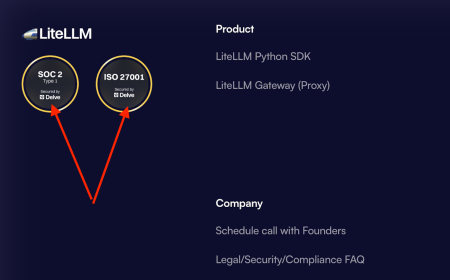

Google is unique in the world in that we have everything from the interface to the infrastructure layer. We can build data centres. We can buy electricity and build power plants. We have our own chips. We have our own model. We have the inference layer that we control. We have the agentic layer we control. We have APIs for memory and interleaved code. We have an agent engine on top of that that ensures compliance and governance. And then we even have the Gemini enterprise chat interface and Gemini chat for consumers, right? Part of the reason I came here is that I saw Google as uniquely vertically integrated, which I saw as a strength for us.

It's odd because, despite the differences between companies, the three big labs feel really close in capabilities. Is it just a race for more intelligence, or is it more complicated than that?

I see three boundaries. Models like Gemini Pro are tuned for raw intelligence. Think about writing code. You want the best code you can get; it doesn't matter if it takes 45 minutes, because I have to maintain it and put it in production. I want the best.

Then there's this other boundary with latency. If I'm doing customer support and need to know how to apply a policy, I need intelligence to do so. Are you allowed to transact a return? Can I upgrade my seat on an aeroplane? But it doesn't matter how right you are if it took 45 minutes to get the answer. So for those cases, you want the most intelligent product within that latency budget, because more intelligence no longer matters once that person gets bored and hangs up the phone.

And then there's this last bucket, where somebody like Reddit or Meta wants to moderate the entire internet. They have large budgets, but they can't take on enterprise risk if they don't know how it scales. They don't know how many poisonous posts there will be today or tomorrow. So they have to restrict their budget to a model at the highest intelligence they can afford, but in a scalable way to an infinite number of subjects. And for that, cost becomes very, very important.
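To make the three boundaries concrete, here is a minimal sketch of the selection logic Gerstenhaber describes: pick the most capable model that still fits a latency budget, a cost budget, or both. The tier names, quality scores, latencies, and costs are invented for illustration and do not correspond to any real product or pricing; `ModelTier` and `pick_tier` are hypothetical helpers, not part of any Google API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelTier:
    name: str             # hypothetical tier label, not a real product name
    quality: float        # illustrative quality score, higher is better
    latency_s: float      # illustrative typical response time in seconds
    cost_per_call: float  # illustrative cost per request, arbitrary units


# Made-up numbers, for illustration only.
TIERS = [
    ModelTier("large", quality=0.95, latency_s=30.0, cost_per_call=1.00),
    ModelTier("medium", quality=0.85, latency_s=2.0, cost_per_call=0.10),
    ModelTier("small", quality=0.70, latency_s=0.5, cost_per_call=0.01),
]


def pick_tier(
    max_latency_s: Optional[float] = None,
    max_cost_per_call: Optional[float] = None,
) -> Optional[ModelTier]:
    """Return the highest-quality tier that fits the given budgets.

    A budget of None means that constraint does not apply.
    Returns None when no tier satisfies every constraint.
    """
    candidates = [
        t for t in TIERS
        if (max_latency_s is None or t.latency_s <= max_latency_s)
        and (max_cost_per_call is None or t.cost_per_call <= max_cost_per_call)
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda t: t.quality)


# Raw intelligence: no budget, so the most capable tier wins.
coding_choice = pick_tier()
# Latency-bound: a support call that must answer within a few seconds.
support_choice = pick_tier(max_latency_s=3.0)
# Cost-bound: moderation at unpredictable volume with a strict per-call cap.
moderation_choice = pick_tier(max_cost_per_call=0.05)
```

In this toy setup the three calls land on three different tiers, which is the point of the framing: the "best" model depends on which budget binds first.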

One of the things I've been puzzling about is why agentic systems are taking so long to catch on. It feels like the models are there, and I've seen incredible demos, but we're not seeing the kind of major changes I would have expected a year ago. What do you think is holding it back?

This technology is basically two years old, and there's still a lot of missing infrastructure. We don't have audit patterns for what agents are doing. We don't have patterns for authorising what an agent can access. These are patterns that will require work to put into production. Production is always a trailing indicator of what the technology is capable of. So two years isn't long enough to see what the intelligence supports in production, and that's where people are struggling.

I think it's moved particularly quickly in software engineering because it fits well within the software development lifecycle. We have a dev environment where it's safe to break things, and then we promote from there to the test environment. The process of writing code at Google requires two people to audit that code, and both affirm that it's good enough to put Google's brand behind and give to our customers. So we have many human-in-the-loop processes that make the implementation exceptionally low-risk. But we need to produce those patterns in other places and for other professions.
