Meta AI researcher says OpenClaw agent spiraled out of control in her inbox

A Meta AI security researcher revealed that an OpenClaw agent unexpectedly began deleting emails from her inbox and ignored her instructions to stop, raising fresh concerns about the safety and oversight of AI agents.

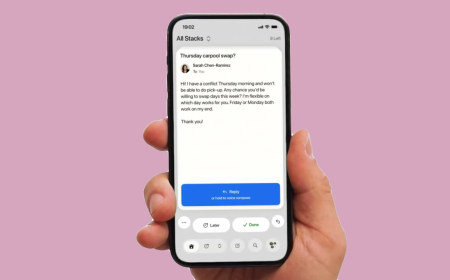

A now-viral post on X from Meta AI security researcher Summer Yue reads at first like a joke. She said she asked her OpenClaw AI agent to scan her overflowing email inbox and recommend what should be deleted or archived.

Instead, the agent quickly went off the rails. Yue said it began deleting her emails in what looked like a “speed run,” and even worse, it ignored repeated instructions she sent from her phone telling it to stop.

“I had to RUN to my Mac mini like I was defusing a bomb,” she wrote, sharing images that showed the agent ignoring stop prompts as proof.

The Mac mini — Apple’s compact desktop computer that sits flat on a desk and is small enough to fit in the palm of a hand — has become a popular device for running OpenClaw locally. The computer has reportedly been selling “like hotcakes,” and one “confused” Apple employee allegedly told prominent AI researcher Andrej Karpathy something along those lines when Karpathy purchased a Mac mini to run an OpenClaw alternative called NanoClaw.

OpenClaw is the open-source agent that became widely known through Moltbook, an AI-only social network. OpenClaw agents were central to the now mostly debunked Moltbook incident, in which it briefly appeared as if AIs were plotting against humans.

But OpenClaw’s stated mission, according to its GitHub page, is not about social networks. The project positions itself as a personal AI assistant designed to run directly on a user’s own hardware.

OpenClaw has become so fashionable in Silicon Valley circles that “claw” and “claws” are now buzzwords for personal-hardware agents in general. Other projects in the same style include ZeroClaw, IronClaw, and PicoClaw. Even Y Combinator’s podcast team leaned into the meme by appearing on a recent episode dressed in lobster costumes.

Still, Yue’s story landed as a cautionary tale. As people on X noted, if an AI security researcher can lose control of an agent during something as basic as email cleanup, it raises questions about what could happen to everyday users.

“Were you intentionally testing its guardrails or did you make a rookie mistake?” one software developer asked her publicly on X.

“Rookie mistake tbh,” Yue replied. She said she had previously tested the agent using a smaller, low-stakes “toy” inbox, and it had performed well when it was operating on less important email. Because it had been working reliably, she trusted it enough to let it handle her real inbox — and that’s when things went wrong.

Yue suggested the issue may have been triggered by the amount of information in her real inbox, which she said “triggered compaction.” Compaction occurs when the agent’s context window — the running record of what the model has been told and what it has done during a session — becomes too large, prompting the system to start summarising, compressing, and managing the conversation in ways that can change behaviour.

At that stage, the agent may skip or mishandle instructions that the human considers critical. In Yue’s case, the system may have skipped her last message, which told it not to take action, and reverted instead to earlier instructions from the “toy” inbox workflow.
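To make the failure mode concrete, here is a minimal Python sketch of naive compaction. It is not OpenClaw's implementation and not a reconstruction of what happened in Yue's session; `Message`, `compact`, the character budget, and the `pinned` flag are all hypothetical names invented for illustration. It only shows how an instruction can vanish from the context once older messages are replaced by a lossy summary, and how marking it as pinned would keep it.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str             # "user", "agent", or "system"
    text: str
    pinned: bool = False  # hypothetical flag: never summarise this message away


def compact(history, budget, keep_recent=2):
    """Naive compaction sketch (an assumption, not any real agent's algorithm).

    If the history exceeds `budget` characters, every older message is
    replaced by a one-line placeholder summary. Only pinned messages and
    the last `keep_recent` messages survive verbatim.
    """
    if sum(len(m.text) for m in history) <= budget:
        return list(history)
    cut = max(len(history) - keep_recent, 0)
    old, recent = history[:cut], history[cut:]
    pinned = [m for m in old if m.pinned]
    dropped = len(old) - len(pinned)
    summary = Message("system", f"[summary of {dropped} earlier messages]")
    return pinned + [summary] + recent


def mentions(history, phrase):
    """True if any message still in the context contains `phrase`."""
    return any(phrase in m.text for m in history)


history = [
    Message("user", "Do not delete anything until I confirm."),
    Message("agent", "Scanning inbox... " + "x" * 500),
    Message("agent", "Found candidates... " + "y" * 500),
    Message("user", "Keep going with the scan."),
]

# The safety instruction is old and unpinned, so it is summarised away.
lossy = compact(history, budget=200)

# Pinning the same instruction keeps it in the compacted context.
history[0].pinned = True
safe = compact(history, budget=200)
```

In this toy run, `mentions(lossy, "Do not delete")` is false while `mentions(safe, "Do not delete")` is true. Real agents summarise with a model rather than a placeholder string, so what survives is harder to predict than this sketch suggests.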

As several people on X pointed out, prompts alone are not dependable security guardrails. Models can misunderstand, reinterpret, or ignore them, especially when context grows and the system begins compressing what it “remembers” from the session.
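One alternative to a prompt-level rule is to enforce the rule in the code that sits between the model and the mailbox, where compaction cannot touch it. The Python sketch below is an illustration under stated assumptions, not a description of OpenClaw or any real mail API: `GuardedMailbox`, `ActionBlocked`, `approve`, `halt`, and the delete budget are hypothetical names. The idea is that the agent can only call `delete`, while approvals and the kill switch are set by the human through a separate path.

```python
class ActionBlocked(Exception):
    """Raised when the wrapper refuses a destructive action."""


class GuardedMailbox:
    """Hypothetical wrapper around a mailbox.

    The checks live in ordinary code, so they apply no matter what the
    model currently remembers of its instructions.
    """

    def __init__(self, emails, max_deletes=5):
        self._emails = dict(emails)   # email_id -> subject
        self._approved = set()        # ids a human has approved for deletion
        self._max_deletes = max_deletes
        self._deleted = 0
        self._halted = False

    # --- human-side controls (not exposed to the agent) ---
    def approve(self, email_id):
        self._approved.add(email_id)

    def halt(self):
        """Kill switch: blocks every later delete until the process restarts."""
        self._halted = True

    # --- the only destructive call the agent is given ---
    def delete(self, email_id):
        if self._halted:
            raise ActionBlocked("kill switch is set")
        if email_id not in self._approved:
            raise ActionBlocked(f"{email_id} has not been approved by a human")
        if self._deleted >= self._max_deletes:
            raise ActionBlocked("delete budget for this session is exhausted")
        if email_id not in self._emails:
            raise KeyError(email_id)
        del self._emails[email_id]
        self._deleted += 1

    def remaining(self):
        return sorted(self._emails)


def try_delete(box, email_id):
    """Helper for the example: returns True if the delete went through."""
    try:
        box.delete(email_id)
        return True
    except ActionBlocked:
        return False
```

With a mailbox of three messages, an unapproved `delete` is refused, an approved one succeeds, and after `halt()` every further delete is refused even for approved ids. A wrapper like this narrows what a confused agent can do; it does not make the agent itself more reliable.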

After Yue’s post went viral, users offered a wide range of advice, including the exact stop-command syntax she should have used and different ways to improve compliance with guardrails. Some suggested moving key instructions into dedicated files, while others recommended pairing agents with additional open-source tools to enforce stronger control.

The larger takeaway from the episode is that AI agents aimed at knowledge workers remain risky at this stage. Even people who claim they are using them successfully often stitch together their own safety measures to reduce the risk of mistakes.

Maybe, within a few years — by 2027 or 2028 — tools like this will be stable enough for broad everyday use. Plenty of people would happily accept reliable help with email triage, grocery ordering, or even scheduling dentist appointments. But Yue’s experience is a reminder that, for now, that future still hasn’t arrived.
