OpenAI introduces open-source tools to support teen safety in app development

OpenAI launches open-source tools to help developers build safer digital experiences for teens, with a focus on privacy, content moderation, and age-appropriate AI use.

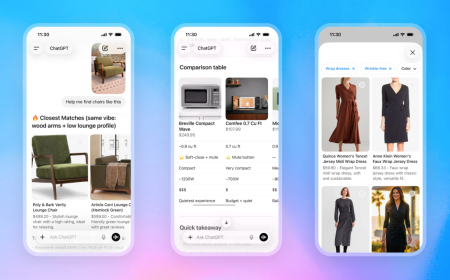

OpenAI announced on Tuesday that it is rolling out a new set of prompts designed to help developers build safer applications for teenagers. The company said these teen safety policies are intended to be used alongside its open-weight safety model, gpt-oss-safeguard.

Instead of requiring developers to design safety systems from the ground up, the new prompts provide a ready-made framework that can be integrated into applications. These policies cover a range of sensitive areas, including graphic violence, sexual content, harmful body image standards, risky activities and viral challenges, romantic or violent roleplay scenarios, as well as access to age-restricted products and services.

The safety guidelines are structured as prompts, allowing them to be used not only with OpenAI’s own models but also adapted for compatibility with other AI systems. However, the company noted that they are likely to perform best within its own ecosystem.
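To illustrate what "structured as prompts" can mean in practice, here is a minimal sketch of pairing a policy prompt with a piece of content before sending it to a classifier model. Everything in it is an assumption made for illustration: the policy text is a made-up placeholder rather than one of OpenAI's published policies, the function name `build_classification_messages` is hypothetical, and the layout (policy as the system message, content as the user message) is a common chat convention rather than a confirmed requirement of gpt-oss-safeguard.

```python
from typing import Dict, List

# Hypothetical placeholder policy, NOT one of the published teen safety policies.
EXAMPLE_POLICY = """
Classify the user content against the following rule.
Rule: content that encourages dangerous viral challenges is a violation.
Answer with exactly one word: VIOLATION or SAFE.
"""


def build_classification_messages(policy: str, content: str) -> List[Dict[str, str]]:
    """Pair a policy prompt with the content to be classified.

    Assumed layout: the policy goes in the system message and the content
    to check goes in the user message. Other models may expect a different
    arrangement, so treat this as a starting point only.
    """
    if not policy.strip():
        raise ValueError("policy prompt must not be empty")
    return [
        {"role": "system", "content": policy.strip()},
        {"role": "user", "content": content},
    ]


messages = build_classification_messages(
    EXAMPLE_POLICY, "Try holding your breath until you pass out!"
)
```

Because the policy is plain text, the resulting `messages` list could in principle be passed to any chat-style model, which is what makes this kind of prompt portable across AI systems.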

To develop these policies, OpenAI collaborated with organisations focused on child safety and digital well-being, including Common Sense Media and everyone.ai.

“These prompt-based policies help set a meaningful safety floor across the ecosystem, and because they’re released as open source, they can be adapted and improved over time,” said Robbie Torney, head of AI & Digital Assessments at Common Sense Media, in a statement.

OpenAI acknowledged that developers, even those with significant experience, often face challenges in converting broad safety objectives into clear, enforceable rules. According to the company, this difficulty can result in inconsistent safeguards, gaps in protection, or overly aggressive filtering that affects usability.

“Clear, well-scoped policies are a critical foundation for effective safety systems,” OpenAI said in its blog post, emphasising the need for practical implementation tools rather than abstract guidelines.

The company also made clear that these new policies are not a complete solution to the broader challenges of AI safety. Instead, they are part of an ongoing effort to improve protections for younger users. Previous steps have included parental controls, age detection systems, and updates to its Model Spec, the framework that defines how its AI systems should behave, including in interactions with users under 18.

Despite these efforts, OpenAI’s approach to safety has faced scrutiny. The company is currently dealing with multiple lawsuits filed by families who allege that excessive use of ChatGPT contributed to suicides. These cases highlight the difficulty of fully preventing harmful interactions, especially when users push beyond existing safeguards. No AI system, the company admits, can offer completely foolproof protection.

Even so, the release of these open-source safety prompts represents a step forward, particularly for independent developers who may lack the resources to build comprehensive safety systems on their own.
