Startup bets on tokenmaxxing to build the next compute powerhouse

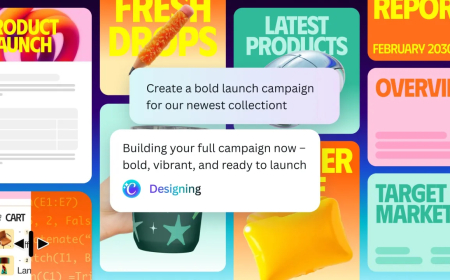

A new startup is betting on “tokenmaxxing”, developers’ appetite for ever more AI tokens, to build a large compute ecosystem and reshape how AI resources are distributed and utilised globally.

“Give me tokens. Just give me tokens. I want them fast. I want them cheaply. I want them now.”

That’s the kind of demand developers are voicing as they build software on generative AI systems, or at least that’s how Parasail CEO Mike Henry describes it. Parasail provides cloud computing services tailored to companies running AI models for inference. Henry said the platform already processes around 500 billion tokens a day, underscoring the scale of what he calls “tokenmaxxing.”

Henry previously worked as an executive at Groq, where he was responsible for building its cloud platform. That experience gave him an early sense that developers working with AI models would increasingly require cloud infrastructure specifically designed for their workloads. After operating quietly for a year, Parasail has now secured $32 million in a Series A funding round to expand that vision.

Although Henry’s expertise lies in hardware and chip design, Parasail is not building its own processors. The company operates some GPUs itself, but sources most of its computing capacity externally. It rents processing power from around 40 data centres in 15 countries and tops up that capacity through liquidity markets, orchestrating everything behind the scenes to lower the cost of running inference.

By distributing workloads efficiently and avoiding demand spikes, the company is attempting to compete with providers that rely heavily on their own chips, which may already be tied up with existing customers and long-term commitments.

Parasail’s growth strategy depends on developers continuing to adopt open-source AI models and autonomous agents outside the major AI labs. According to the company’s leadership and investors, this shift is being driven by the rising cost and complexity of using services from companies such as Anthropic and OpenAI.

A hybrid approach is starting to take shape, said Andreas Stuhlmüller, CEO of Elicit, which recently raised $22 million in a Series A round. Elicit builds AI-powered tools for analysing scientific research, with customers including major pharmaceutical firms that rely on its system to process data from tens of thousands of academic papers.

“We’ve shifted more toward open models because sending hundreds of thousands of requests to a single API endpoint can be difficult,” Stuhlmüller explained, noting that his company increasingly uses agents to divide tasks and operate over longer timeframes. In this setup, open models handle early-stage analysis to keep costs down, while more advanced models are used later to deliver refined outputs.

As AI agents become more deeply integrated into software development, the volume of model queries is rising sharply. That surge is fuelling interest in companies like Parasail, which aim to provide lower-cost infrastructure for inference workloads. Samir Kumar, a partner at Touring Capital and co-leader of the funding round, said he expects inference to eventually account for at least 20% of software development costs.

The question now is how much of that expanding market Parasail can capture. Cloud computing is already a crowded sector. Henry believes two things give Parasail an edge over larger providers focused on enterprise clients, as well as over better-funded competitors such as Fireworks AI and Baseten. First, it focuses on inference rather than training. Second, it is willing to work with early-stage startups without requiring long-term contracts.

Still, relying heavily on startups as customers introduces its own uncertainties, particularly in a rapidly evolving AI landscape.

Steve Jang, a partner at Kindred Ventures, which also co-led the round, said the economics of deploying AI models will increasingly favour compute brokerage services like Parasail’s. He added that demand is likely to grow further as AI expands into areas such as content generation and robotics.

“People used to think there was an AI bubble,” Jang said. “There isn’t. Demand for inference is growing much faster than supply.”
