Bluesky issues its first transparency report, noting rise in user reports and legal demands

Bluesky has released its first transparency report, detailing increased user moderation reports, more legal requests, and enforcement actions as the platform continues to grow rapidly.

Bluesky has published its first comprehensive transparency report, revealing a sharp increase in user moderation reports and a significant rise in legal requests, alongside detailed data on content enforcement and platform growth.

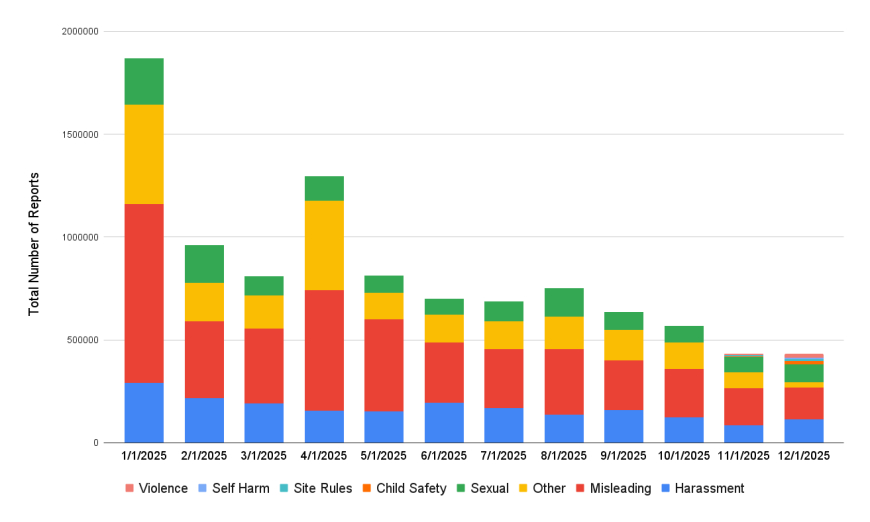

According to the report, roughly 3% of Bluesky’s total user base — about 1.24 million users — submitted moderation reports during 2025. The largest share of reports fell under the “misleading” category, which accounted for 43.73% of all reports. Reports related to harassment made up 19.93%, while sexual content represented 13.54%.

A broad “other” category, covering reports that did not clearly fit a major classification, accounted for 22.14%. The remaining classifications, such as violence, child safety, rule violations, and self-harm, each made up a far smaller portion of the total.

Within the misleading content category, which totalled 4.36 million reports, spam alone accounted for approximately 2.49 million submissions.

Harassment-related reports totalled 1.99 million. Among the specific subcategories, hate speech was the largest, with about 55,400 submissions. Other reported behaviours included targeted harassment (approximately 42,520 reports), trolling (around 29,500 reports), and doxxing (roughly 3,170 reports). Bluesky noted, however, that the majority of harassment reports fell into a grey area of antisocial behaviour: conduct that may be rude or disruptive but does not clearly meet the criteria for hate speech or other defined violations.

Reports related to sexual content totalled 1.52 million, with the vast majority involving mislabelling. Bluesky explained that these cases typically involved adult material that was not properly tagged with metadata, which users rely on to manage their personal moderation preferences. Smaller numbers of reports addressed nonconsensual intimate imagery (about 7,520), abusive content (approximately 6,120), and deepfake material (just over 2,000).

Violence-related reports numbered 24,670 in total. Subcategories included threats or incitement (around 10,170 reports), glorification of violence (6,630 reports), and extremist content (about 3,230 reports).

Beyond user-generated reports, Bluesky’s automated systems flagged an additional 2.54 million potential violations during the year.

One area the company highlighted as a success was a sharp reduction in daily reports of antisocial behaviour. After Bluesky introduced a system that identifies potentially toxic replies and hides them behind an extra click (similar to a mechanism used by X), reports of this type dropped by 79%. Reports also declined steadily over the year, with reports per 1,000 monthly active users falling 50.9% from January through December.

Image Credits: Bluesky

The transparency report outlines the work of Bluesky’s Trust & Safety team, along with updates on age-assurance compliance, detection of influence operations, automated labelling, and other safety initiatives. The report arrives as the company continues rapid expansion. In 2025, Bluesky’s user base grew by nearly 60%, from 25.9 million to 41.2 million. That figure includes users hosted on Bluesky’s own infrastructure and those operating their own servers within the decentralised network built on the AT Protocol.

Over the past year, users published 1.41 billion posts on Bluesky, accounting for 61% of all posts ever made on the platform. Of those, 235 million posts contained media, representing 62% of all media posts shared since Bluesky’s launch.

The company also disclosed that legal requests rose more than sixfold in 2025. Requests from law enforcement agencies, government regulators, and legal representatives totalled 1,470, up from 238 the previous year.

Image Credits: Bluesky

Although Bluesky had previously released moderation-focused updates in 2023 and 2024, this marks the first time it has produced a full transparency report covering moderation, regulatory compliance, account verification, and related areas in a single document.

Moderation reports rise sharply

User moderation reports increased substantially in 2025. After a 17-fold jump in reports in 2024, Bluesky recorded a further 54% increase in 2025, from 6.48 million reports to 9.97 million. The company noted that this increase closely mirrored its 57% growth in users over the same period.

Separately, Bluesky reported removing 3,619 accounts suspected of involvement in influence operations, most of which were believed to be linked to Russia.

Takedowns and legal actions increase

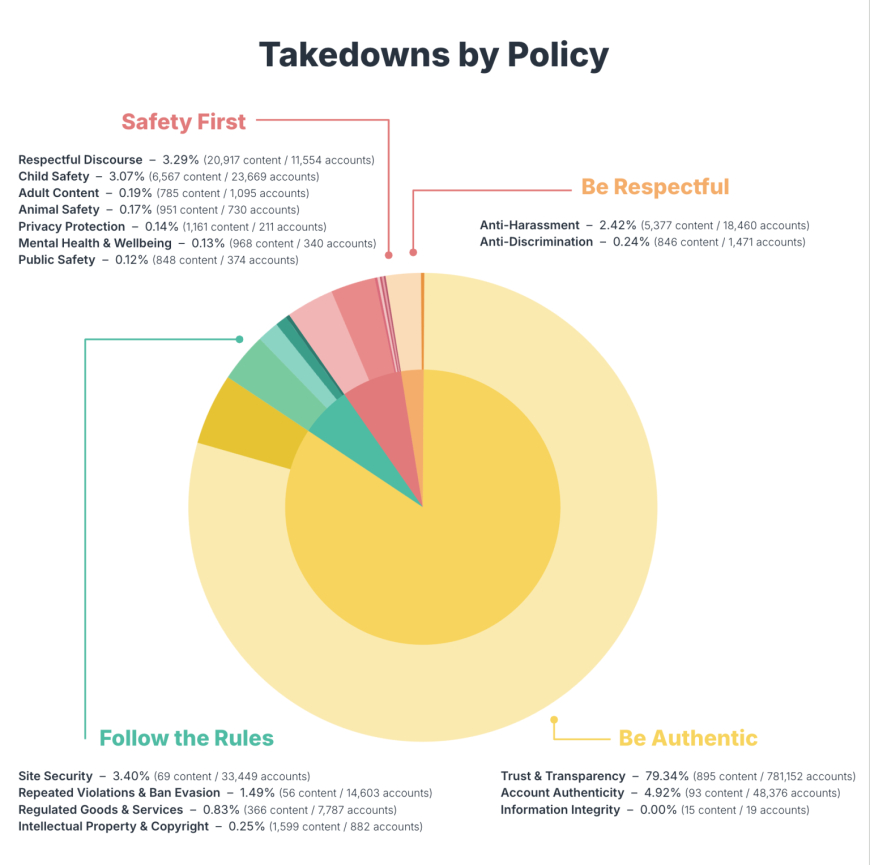

Bluesky said it became more aggressive in enforcing its rules last fall, and the latest data reflects that shift. In 2025, the company removed 2.44 million items, including accounts and individual pieces of content. By comparison, the previous year saw 66,308 accounts taken down manually, with automated systems removing an additional 35,842 accounts.

Moderators also manually removed 6,334 records, while automated tools removed 282. During the year, Bluesky issued 3,192 temporary suspensions and permanently removed 14,659 accounts for ban evasion. Most permanent bans targeted accounts involved in inauthentic behaviour, coordinated spam networks, or impersonation.

Despite the increase in removals, the report suggests that Bluesky favours labelling content over outright bans whenever possible. In 2025, the platform applied 16.49 million labels to posts, a 200% year-over-year increase. During the same period, account takedowns rose 104%, from 1.02 million to 2.08 million. The majority of content labels were applied to adult or suggestive material, including nudity.
