AI-powered galaxy research is worsening the global GPU shortage

AI-driven galaxy research is increasing demand for GPUs, intensifying the global chip shortage and impacting tech industries and scientific computing.

NASA has announced that it will launch the Nancy Grace Roman Space Telescope into orbit in September 2026, eight months ahead of its original schedule. The mission is expected to generate around 20,000 terabytes of astronomical data over its operational lifetime.

That massive data flow will add to an already expanding stream of space-based observations, including 57 gigabytes of high-resolution imagery transmitted daily from the James Webb Space Telescope, which launched in December 2021. It will also coincide with data collection from the Vera C. Rubin Observatory in Chile, which is set to begin later this year and is expected to produce around 20 terabytes of data every night.

For comparison, the Hubble Space Telescope, once considered the gold standard of space observation, produces only about 1-2 gigabytes of sensor data per day. Raw data from space missions has long been processed by software rather than by hand, but astronomers now increasingly rely on GPUs to cope with the scale and complexity of modern analysis.

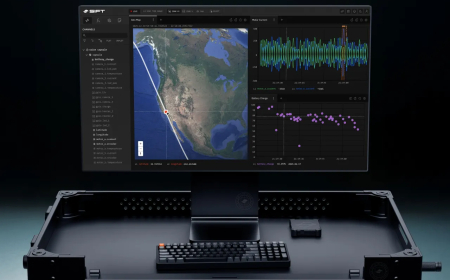

Brant Robertson, an astrophysicist at UC Santa Cruz, has been closely involved in this shift while working with data from multiple space missions. Over the past 15 years, he has collaborated with Nvidia on applying GPU computing to astrophysics, initially for large-scale simulations of phenomena like supernova explosions and now for processing vast observational datasets.

“There’s been this evolution [from] looking at a few objects, to doing CPU-based analyses on large scales of the dataset, to then doing GPU-accelerated versions of those same analyses,” he said.

Robertson and graduate student Ryan Hausen developed a deep learning system called Morpheus, designed to scan large datasets and automatically identify galaxies. Early analyses of data from the James Webb Space Telescope revealed unexpected numbers of certain disk galaxies, prompting new questions about galaxy formation and evolution.

Now, Morpheus itself is evolving. Robertson is transitioning its architecture from convolutional neural networks to transformer-based models, the same type of architecture that powers large language models. The change is expected to significantly expand the area of sky the system can analyse, speeding up research output.

He is also working on generative AI systems trained on space telescope data to enhance observations made by ground-based telescopes. These systems aim to correct distortions caused by Earth’s atmosphere, improving image quality for observatories like the Vera C. Rubin Observatory. Despite advances in space launch capabilities, placing large telescopes with eight-meter mirrors into orbit remains extremely difficult and expensive, making software-based enhancement a critical alternative.

However, Robertson says access to GPU resources is becoming increasingly constrained. He has relied on support from the National Science Foundation (NSF) to build GPU computing clusters at UC Santa Cruz. Still, he notes that these systems are already becoming outdated as demand accelerates across the scientific community. At the same time, proposed budget cuts could further restrict access to computing infrastructure.

“People want to do these AI, ML analyses, and GPUs are really the way to do that,” Robertson said. “You have to be entrepreneurial…especially when you’re working kind of at the edge of where the technology is. Universities are very risk-averse because they just have constrained resources, so you have to go out and show them that, ‘look, this is where we’re going as a field’.”
