X Open Sources Its Algorithm as It Faces Transparency Fine and Grok Controversies

X has open-sourced its recommendation algorithm amid an EU transparency fine and growing scrutiny over its Grok AI chatbot.

In 2023, the social media platform then known as Twitter made a partial move toward openness by releasing portions of its recommendation algorithm to the public for the first time. At the time, Tesla CEO Elon Musk had only recently completed his takeover of the company and said he wanted to overhaul the platform with a strong emphasis on transparency.

That initial release, however, was quickly criticized. Observers described it as “transparency theater,” arguing that the shared code was incomplete and offered little real insight into how the platform actually operated or why its systems functioned the way they did.

Now the platform, rebranded as X, has once again open-sourced its algorithm, following through on a commitment Musk made last week. “We will make the new 𝕏 algorithm, including all code used to determine what organic and advertising posts are recommended to users, open source in 7 days,” Musk wrote at the time. He also said the company would repeat the release every four weeks, with developer notes explaining what changed.

We will make the new 𝕏 algorithm, including all code used to determine what organic and advertising posts are recommended to users, open source in 7 days.

This will be repeated every 4 weeks, with comprehensive developer notes, to help you understand what changed. — Elon Musk (@elonmusk) January 10, 2026

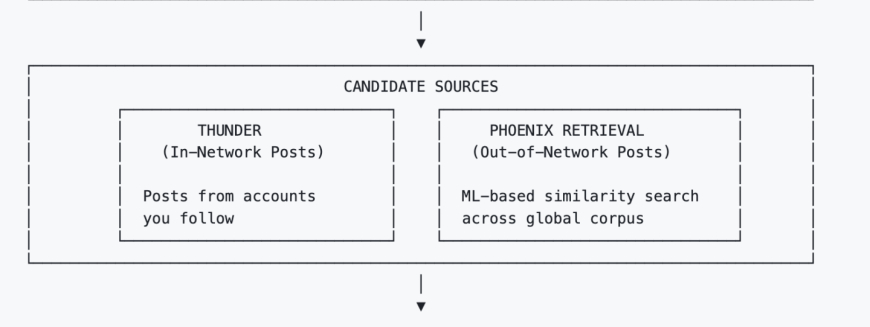

On Tuesday, X published a detailed post on GitHub, offering a plain-language explanation of how its feed-ranking system works, along with a visual diagram outlining the process. While the disclosures don’t radically change public understanding of the platform, they do shed more light on how content is selected and prioritized.

According to the diagram, when the system assembles a feed for a user, it reviews that person’s past engagement—such as posts they have clicked on or interacted with—and scans recent posts from accounts within their network. It also applies machine-learning analysis to “out-of-network” content, meaning posts from accounts the user doesn’t follow but that the system believes may still be of interest.
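The sourcing step can be sketched in a few lines of Python. This is not X's code. The `Post` type, the averaged "interest vector," and the dot-product similarity are illustrative assumptions standing in for the machine-learning model the documentation describes. The sketch gathers recent posts from followed accounts, then adds a few out-of-network posts that look closest to what the user has engaged with before.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    post_id: int
    author_id: int
    embedding: tuple[float, ...]  # hypothetical topic vector for the post


def dot(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    return sum(x * y for x, y in zip(a, b))


def interest_vector(engaged: list[Post]) -> tuple[float, ...] | None:
    """Average the embeddings of posts the user engaged with (a crude stand-in for a learned user model)."""
    if not engaged:
        return None
    dim = len(engaged[0].embedding)
    return tuple(sum(p.embedding[i] for p in engaged) / len(engaged) for i in range(dim))


def source_candidates(following: set[int], engaged: list[Post], recent: list[Post],
                      out_of_network_limit: int = 2) -> list[Post]:
    # In-network: recent posts from accounts the user follows.
    in_network = [p for p in recent if p.author_id in following]
    # Out-of-network: posts from non-followed accounts, ranked by similarity to past engagement.
    interest = interest_vector(engaged)
    if interest is None:
        return in_network
    pool = [p for p in recent if p.author_id not in following]
    pool.sort(key=lambda p: dot(interest, p.embedding), reverse=True)
    return in_network + pool[:out_of_network_limit]


if __name__ == "__main__":
    recent = [
        Post(1, author_id=10, embedding=(1.0, 0.0)),
        Post(2, author_id=20, embedding=(0.9, 0.1)),
        Post(3, author_id=30, embedding=(0.0, 1.0)),
        Post(4, author_id=10, embedding=(0.0, 1.0)),
    ]
    engaged = [Post(99, author_id=40, embedding=(1.0, 0.0))]
    feed = source_candidates({10}, engaged, recent, out_of_network_limit=1)
    print([p.post_id for p in feed])  # [1, 4, 2]
```

Post 2 comes from an account the user does not follow, but it is pulled in because it resembles a post the user engaged with earlier.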

Image Credits: Screenshot

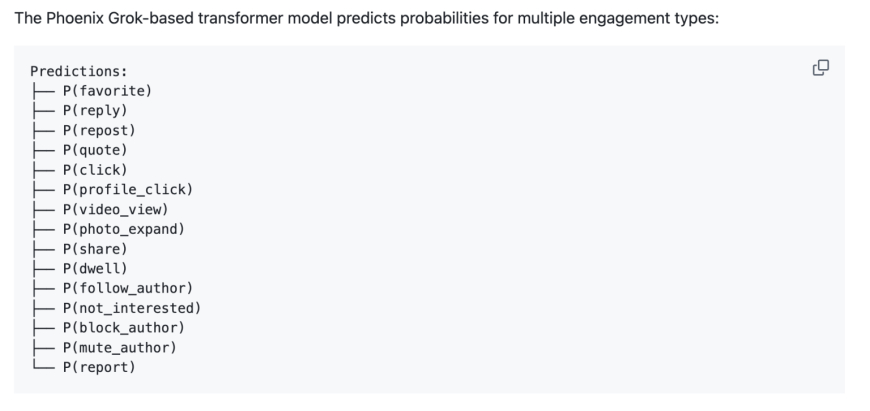

From there, the algorithm removes certain types of content. This includes posts from blocked accounts, content tied to muted keywords, and material flagged as overly violent or spam-like. The remaining posts are then ranked based on predicted appeal. Factors such as relevance and diversity are taken into account so users aren’t shown repetitive or overly similar content. The system also weighs the likelihood that a user will like, reply to, repost, or otherwise engage with each post.
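The filter-then-rank flow can be sketched the same way. Everything below is hypothetical: the label names, the substring match for muted keywords, and the author-diversity penalty (each further post from an already-shown author has its score multiplied by `decay`) are assumptions chosen for illustration. The penalty is one common way to keep a feed from repeating itself, not necessarily the method X uses. The `score` field stands in for the model's predicted engagement.

```python
from dataclasses import dataclass, field

# Hypothetical moderation labels that remove a post from the feed.
BLOCKING_LABELS = frozenset({"spam", "violence"})


@dataclass
class Candidate:
    post_id: int
    author_id: int
    text: str
    score: float  # predicted engagement, assumed to come from an upstream model
    labels: frozenset = field(default_factory=frozenset)


def filter_candidates(cands, blocked_authors, muted_keywords):
    """Drop posts from blocked accounts, posts matching muted keywords, and flagged posts."""
    muted = [k.lower() for k in muted_keywords]
    kept = []
    for c in cands:
        if c.author_id in blocked_authors:
            continue
        if any(k in c.text.lower() for k in muted):
            continue
        if c.labels & BLOCKING_LABELS:
            continue
        kept.append(c)
    return kept


def rank_with_author_diversity(cands, decay=0.5):
    """Greedy re-rank: an author's n-th extra post is scored as score * decay**n."""
    remaining = list(cands)
    shown = {}  # author_id -> number of that author's posts already placed
    ranked = []
    while remaining:
        best = max(remaining, key=lambda c: c.score * decay ** shown.get(c.author_id, 0))
        remaining.remove(best)
        shown[best.author_id] = shown.get(best.author_id, 0) + 1
        ranked.append(best)
    return ranked


if __name__ == "__main__":
    cands = [
        Candidate(1, 10, "Launch day", 0.9),
        Candidate(2, 10, "Launch day, part two", 0.8),
        Candidate(3, 20, "A different take", 0.6),
        Candidate(4, 30, "Huge spoiler ahead", 0.95),
        Candidate(5, 40, "Click here", 0.99, frozenset({"spam"})),
        Candidate(6, 50, "Hello", 0.7),
    ]
    kept = filter_candidates(cands, blocked_authors={50}, muted_keywords=["Spoiler"])
    print([c.post_id for c in rank_with_author_diversity(kept)])  # [1, 3, 2]
```

Without the diversity penalty (`decay=1.0`), the two posts from author 10 would sit at the top back to back.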

Image Credits: Screenshot

X says the entire process is driven by artificial intelligence. The GitHub documentation explains that the recommendation engine “relies entirely” on the company’s Grok-based transformer model to determine relevance based on user engagement patterns. In practice, the model learns from what users click on and interact with, and that engagement data feeds back into the ranking system. The company also notes that no manual feature engineering is involved in determining relevance, meaning human engineers are not hand-crafting or tuning those signals. According to X, this automation simplifies both its data pipelines and its serving infrastructure.
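One way to picture "relevance from engagement" without hand-built features is a model that reads only the user's engagement history and a candidate post, predicts the probability of each action, and collapses those predictions into a single score. In the sketch below, the model is a stub standing in for the Grok-based transformer, and the action names and weights are invented for illustration; the article does not say how X weighs each action.

```python
from collections.abc import Callable, Mapping, Sequence

# Hypothetical weights; negative signals (e.g. "not interested") pull a post down.
ACTION_WEIGHTS: Mapping[str, float] = {
    "like": 1.0,
    "reply": 2.0,
    "repost": 1.5,
    "not_interested": -5.0,
}

# A predictor maps (engagement history as post IDs, candidate post ID) to a
# probability per action. No hand-engineered features: only the raw history goes in.
Predictor = Callable[[Sequence[int], int], Mapping[str, float]]


def engagement_score(predict: Predictor, history: Sequence[int], candidate: int,
                     weights: Mapping[str, float] = ACTION_WEIGHTS) -> float:
    """Weighted sum of predicted action probabilities; missing actions count as 0."""
    probs = predict(history, candidate)
    return sum(w * probs.get(action, 0.0) for action, w in weights.items())


def rank_by_engagement(predict: Predictor, history: Sequence[int], candidates: Sequence[int],
                       weights: Mapping[str, float] = ACTION_WEIGHTS) -> list[int]:
    """Order candidate post IDs from highest to lowest combined score."""
    return sorted(candidates, key=lambda c: engagement_score(predict, history, c, weights),
                  reverse=True)


if __name__ == "__main__":
    # Toy stand-in for the transformer: a fixed lookup table that ignores history.
    table = {
        101: {"like": 0.5, "reply": 0.1},
        102: {"like": 0.2, "reply": 0.3, "not_interested": 0.05},
        103: {"like": 0.9, "not_interested": 0.2},
    }
    toy_model: Predictor = lambda history, post_id: table[post_id]
    print(rank_by_engagement(toy_model, history=[7, 8, 9], candidates=[103, 102, 101]))  # [101, 102, 103]
```

Post 103 has the highest predicted like rate, but its "not interested" prediction outweighs that under these made-up weights, so it lands last.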

Why X chose to release this information now remains unclear. Musk has repeatedly said he wants the platform to serve as a model of corporate transparency, a message he has continued to emphasize since acquiring the company. When Twitter first published portions of its algorithm in 2023, Musk said that “code transparency” would be “incredibly embarrassing at first” but would ultimately result in better recommendations. “Most importantly, we hope to earn your trust,” he said at the time, describing the move as the start of a “new era of transparency.”

Our “algorithm” is overly complex & not fully understood internally. People will discover many silly things, but we’ll patch issues as soon as they’re found!

We’re developing a simplified approach to serve more compelling tweets, but it’s still a work in progress. That’ll also… — Elon Musk (@elonmusk) March 17, 2023

Despite that rhetoric, some aspects of the platform have arguably become less transparent under Musk’s ownership. After he purchased Twitter in 2022, the company transitioned from a public to a private firm—an environment that typically entails fewer disclosure requirements. While Twitter previously released several transparency reports each year, X did not publish its first such report until September 2024.

Regulators have also raised concerns. In December, the European Union fined X $140 million for alleged violations of transparency requirements under the Digital Services Act. Regulators argued that the platform’s verification check mark system made it harder for users to assess the authenticity of accounts.

More recently, X has faced scrutiny related to how Grok has been used. Over the past month, the platform has come under pressure from the California Attorney General’s Office and U.S. lawmakers, following allegations that the chatbot was used to generate and spread sexualized images, including content involving women and minors. Against that backdrop, some critics see the renewed push for algorithmic openness as another performative gesture rather than a meaningful shift toward accountability.
