Bluesky announces moderation changes focused on better tracking, improved transparency

Bluesky introduces expanded reporting tools, a new strike system, and improved transparency to strengthen moderation as the platform rapidly grows.

Decentralised social network Bluesky, a rising alternative to X and Threads, announced on Wednesday that it is rolling out additional updates to its moderation systems. The company says the changes focus on improving how it tracks Community Guideline violations, enforcing policies more consistently, and providing more precise feedback to users who break the rules. Updates include new reporting categories, adjustments to its strike system, and more detailed guidance during enforcement actions.

The moderation improvements are available in the latest Bluesky app update (v1.110), which also introduces a dark-mode app icon and a redesigned feature for controlling who can reply to your posts.

Bluesky says the changes stem from its rapid growth and the need for “clear standards and expectations for how people treat each other” on the platform.

“On Bluesky, people are meeting and falling in love, being discovered as artists, and having debates on niche topics in cozy corners,” the company wrote in its announcement. “At the same time, some of us have developed a habit of saying things behind screens that we’d never say in person.”

The update also arrives shortly after a high-profile moderation controversy involving author Sarah Kendzior, who was suspended for writing that she wanted to “shoot the author of this article just to watch him die,” a reference to a Johnny Cash lyric. Bluesky moderators read the comment literally, as a threat of violence.

With its new rules and systems, Bluesky appears focused on maintaining a healthier community — and avoiding the levels of toxicity now associated with X, where dunk culture, hostility, and harassment have become commonplace.

Image Credits: Bluesky

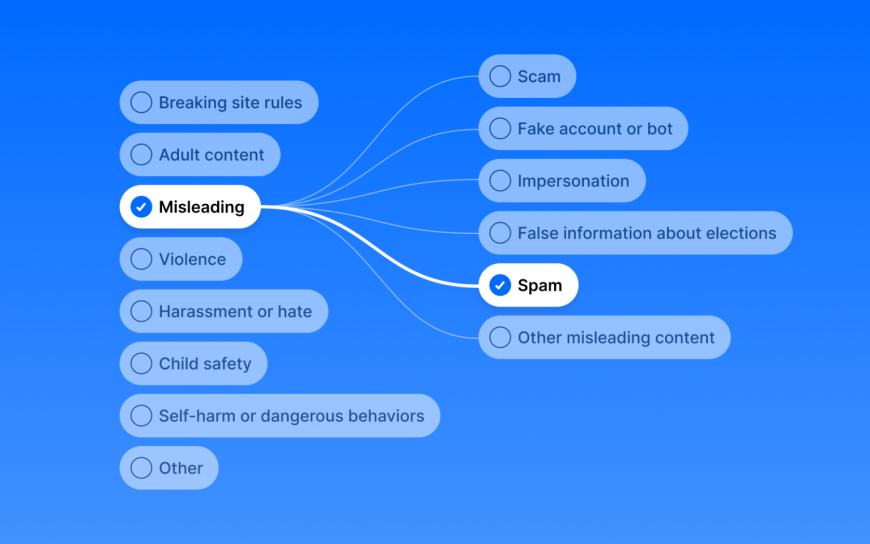

One significant change expands reporting options from six to nine categories, allowing users to identify issues more precisely. New reporting categories include Youth Harassment or Bullying, Eating Disorders, and Human Trafficking, helping Bluesky comply with emerging online safety regulations, including the U.K.’s Online Safety Act.

To support these new tools, Bluesky says it has improved its internal systems so that violations and enforcement decisions are tracked automatically in one place. The company emphasises that it is not changing what it enforces, only improving consistency and transparency.

Under the updated strike system, violations will now be assigned a severity rating, which determines the corresponding enforcement action. “Critical risk” content can lead to a permanent ban, while lower-severity violations receive proportionate penalties. Repeated violations can escalate punishments, potentially leading to permanent account removal rather than temporary suspensions.

Users will now receive detailed notifications when enforcement actions occur, including:

• Which guideline was violated
• Severity level assigned
• Total violation count
• How close they are to the next penalty tier
• Duration and end date of any suspension

Enforcement decisions will also be appealable.
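As a rough illustration only, the notification fields described above could be modelled along the following lines. Bluesky has not published a schema or penalty thresholds in its announcement as reported here, so every name (`Severity`, `EnforcementNotice`, `TIER_THRESHOLDS`, `build_notice`) and every number in this sketch is a hypothetical assumption, not the company's implementation. The sketch also simplifies by using severity only to short-circuit "critical risk" cases to a permanent ban.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum
from typing import Optional


class Severity(Enum):
    LOW = 1
    MODERATE = 2
    HIGH = 3
    CRITICAL = 4  # "critical risk" content can lead to a permanent ban


# Hypothetical penalty tiers: (violation count that triggers the tier,
# suspension length). A length of None stands for permanent removal.
TIER_THRESHOLDS = [(3, timedelta(days=1)), (5, timedelta(days=7)), (8, None)]


@dataclass
class EnforcementNotice:
    guideline: str                              # which guideline was violated
    severity: Severity                          # severity level assigned
    violation_count: int                        # total violation count
    violations_until_next_tier: Optional[int]   # distance to the next tier
    suspension: Optional[timedelta]             # duration of any suspension
    suspension_ends: Optional[datetime]         # end date of any suspension
    permanent: bool
    appealable: bool = True                     # decisions can be appealed


def build_notice(guideline: str, severity: Severity,
                 prior_violations: int, now: datetime) -> EnforcementNotice:
    count = prior_violations + 1
    if severity is Severity.CRITICAL:
        # Assumed shortcut: critical-risk content skips the tiers entirely.
        return EnforcementNotice(guideline, severity, count,
                                 None, None, None, permanent=True)

    suspension: Optional[timedelta] = None
    permanent = False
    until_next: Optional[int] = None
    for threshold, length in TIER_THRESHOLDS:
        if count >= threshold:
            # The highest tier reached so far decides the penalty.
            suspension, permanent = length, length is None
        else:
            until_next = threshold - count
            break

    ends = now + suspension if suspension is not None else None
    return EnforcementNotice(guideline, severity, count,
                             until_next, suspension, ends, permanent)


if __name__ == "__main__":
    notice = build_notice("Harassment", Severity.LOW, prior_violations=2,
                          now=datetime.now(timezone.utc))
    print(notice)
```

With these made-up thresholds, a third low-severity violation would produce a one-day suspension and report that two more violations reach the next tier.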

These updates follow Bluesky’s revised Community Guidelines released in October, part of a broader push to strengthen moderation and enforcement.

Still, some users remain frustrated that Bluesky continues to allow certain controversial accounts to stay active — particularly one writer widely criticised for his posts about trans issues. The debate resurfaced in October after CEO Jay Graber dismissed user concerns in a series of posts.

Bluesky is navigating a complex identity crisis: the platform doesn’t want to be seen as a left-leaning version of Twitter, but many of its early users joined after feeling alienated by Elon Musk’s direction for X. The company wants to support a diverse range of communities while avoiding the pitfalls of centralised platforms.

At the same time, Bluesky must comply with an increasing number of global safety regulations. Earlier this year, the company blocked access in Mississippi, saying it couldn’t abide by the state’s age-verification law, which carries fines of up to $10,000 per noncompliant user.
