Microsoft Unveils Maia 200 Chip Designed to Scale AI Inference

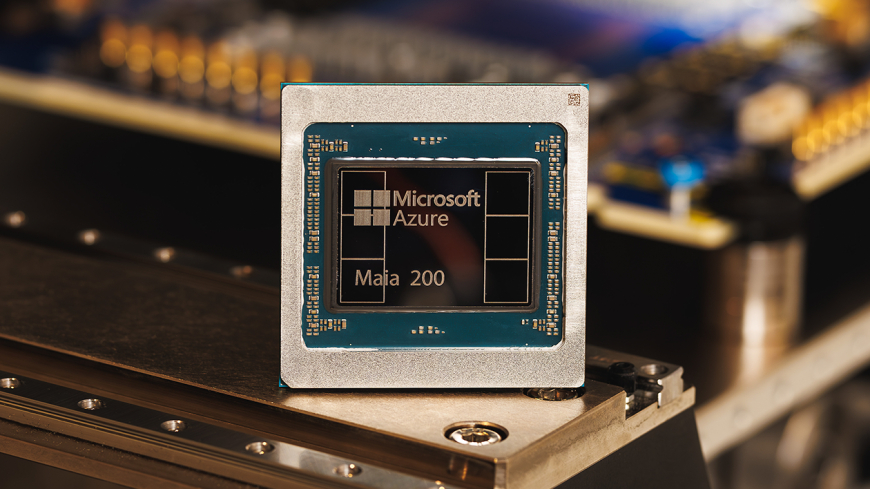

Microsoft has launched the Maia 200 AI inference chip, designed to run large models more efficiently while reducing power use and operational costs.

Microsoft has unveiled its newest custom chip, the Maia 200, describing it as a high-performance processor built specifically to scale AI inference workloads.

The Maia 200 follows the Maia 100, released in 2023, and is engineered to support larger and more advanced AI models with improved speed and efficiency. According to Microsoft, the chip contains more than 100 billion transistors and delivers over 10 petaflops of performance at 4-bit precision (FP4), along with roughly 5 petaflops at 8-bit precision (FP8). These figures represent a significant leap over the earlier generation.

Inference refers to the phase in which a trained AI model is run to generate outputs, as opposed to the compute-intensive process of training it. As AI companies scale their operations, inference has emerged as a major driver of computing costs, prompting increased focus on optimising how models are deployed and executed.

Microsoft says the Maia 200 is intended to address those challenges by reducing power consumption and operational friction for AI workloads. “In practical terms, one Maia 200 node can effortlessly run today’s largest models, with plenty of headroom for even bigger models in the future,” the company said.

The launch also reflects a broader industry shift toward internally developed chips, as major technology companies look to reduce reliance on Nvidia, whose advanced GPUs have become central to AI development. Google, for example, uses its Tensor Processing Units, or TPUs, which are offered through its cloud platform rather than sold as standalone chips. Amazon has taken a similar approach with its Trainium accelerator line, unveiling the latest version, Trainium3, in December. These alternatives allow companies to shift some AI workloads away from Nvidia hardware, helping control costs.

With Maia, Microsoft aims to position itself alongside those competitors. In a press release issued Monday, the company said the Maia 200 delivers three times the FP4 performance of Amazon’s third-generation Trainium chips and exceeds the FP8 performance of Google’s seventh-generation TPU.

Microsoft noted that the Maia chip is already being used internally to support models developed by its Superintelligence team and to power Copilot, the company’s AI assistant. The company also said it has begun inviting developers, researchers, academic institutions, and leading AI labs to experiment with the Maia 200 software development kit as part of their own workloads.
