Nvidia is quietly building a multibillion-dollar business beyond its chip empire

Nvidia is expanding beyond GPUs into software, AI infrastructure, and services, quietly building a multibillion-dollar business to rival its core chip division.

Nvidia CEO Jensen Huang showed foresight when he began investing in AI-focused chip development in 2010, well before artificial intelligence became a dominant industry trend. A similar strategic decision in 2020, strengthening the company’s position in data centre networking through a key acquisition, has since evolved into one of Nvidia’s fastest-growing and most profitable divisions, yet it has received far less public attention.

Within a relatively short period, Nvidia’s networking segment, which focuses on connecting data centres, has become the company’s second-largest revenue source after its compute business. In the most recent quarter, the division generated $11 billion in revenue, representing a 267% increase compared to the previous year. For the full year, it contributed more than $31 billion, according to Nvidia’s latest financial results.
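As a rough check on those figures, the year-ago quarter can be backed out from the reported growth rate. The sketch below assumes the 267% figure is a plain year-over-year increase on the $11 billion quarter; the function name is illustrative and the result is an implied estimate, not a number reported by Nvidia.

```python
def implied_prior_revenue(current_billion: float, pct_increase: float) -> float:
    """Back out the earlier figure from a current value and a percentage increase."""
    # A 267% increase means the current value is (1 + 2.67) times the earlier one.
    return current_billion / (1 + pct_increase / 100)


# Figures from the article: $11 billion this quarter, up 267% year over year.
prior = implied_prior_revenue(11.0, 267.0)
print(f"Implied year-ago quarter: ${prior:.1f} billion")
```

On that assumption, the same quarter a year earlier would have brought in roughly $3 billion.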

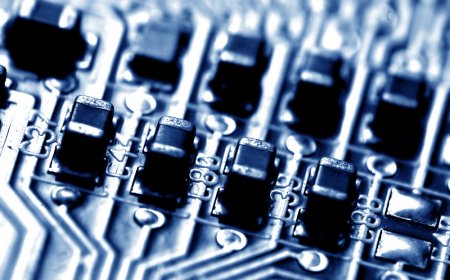

This rapid expansion is closely tied to the rise of AI workloads. The networking division includes technologies such as NVLink, which enables communication between GPUs within a data centre; Nvidia’s InfiniBand switches, designed for in-network computing; Spectrum-X, an Ethernet-based AI networking platform; and co-packaged optics switches, among other innovations.

Together, these components form the infrastructure required to build what Nvidia describes as an “AI factory,” a data centre specifically designed for training and running AI models.

Kevin Cook, a senior equity strategist at Zacks Investment Research, described Nvidia’s networking business as one of the company’s most impressive developments. He noted that the division’s quarterly revenue of $11 billion rivals or exceeds the annual output of established networking companies like Cisco.

Despite its scale and growth, the networking segment has not attracted as much attention as Nvidia’s chip business, which remains significantly larger. It also receives less recognition than the company’s gaming division, which historically formed the core of Nvidia’s operations but is now much smaller.

The foundation of Nvidia’s networking business can be traced back to its $7 billion acquisition in 2020 of Mellanox, an Israeli networking company founded in 1999.

Kevin Deierling, senior vice president of networking at Nvidia, joined the company as part of that acquisition. He acknowledged that the division’s lower visibility might partly be due to limited promotion, though he believes the role of networking is often misunderstood.

“People think of networking as just, ‘I got a printer, and I need to connect to it,’” Deierling said. “Jensen said this the first day when he acquired us, he said the data center is the new unit of computing. Networking is a lot more than just moving the smaller amounts of data between a compute node; it’s actually a foundation.”

Deierling also admitted that he did not fully understand Huang’s reasoning for acquiring Mellanox at the time, but now sees its strategic importance. Integrating networking capabilities with Nvidia’s GPU technology enables the company to deliver more comprehensive and optimised solutions.

“When Jensen bought Mellanox in 2020, he saw that it was the missing piece to make GPUs a complete package,” Cook explained.

Another factor contributing to the success of Nvidia’s networking business is its approach to delivering technology as a fully integrated stack rather than as individual components. The company typically works through partners to bring these solutions to market, rather than selling the hardware directly.

“I can’t think of other companies that have the full-stack capabilities that we have,” Deierling said. “We are really different. We build the full compute stack, a fully integrated stack, and then we go to market through all of our partners.”

At its annual GTC technology conference on March 16, Nvidia announced several updates to its networking ecosystem during Huang’s keynote presentation. These included the introduction of the Nvidia Rubin platform, featuring six new chips designed to power an AI supercomputer. The company also unveiled the Nvidia Inference Context Memory Storage platform and more efficient Spectrum-X Ethernet Photonics switches, among other advancements.

Deierling emphasised that networking is no longer a secondary component in computing systems but has become central to modern AI infrastructure.

“It’s no longer a peripheral to connect the printer, some other slow I/O device,” he said. “It’s fundamental to the computer. In the old days, we had what was called the backplane inside the computer. Today, the network is the backbone of the AI factory, and it’s super important.”
