OpenAI Adds New Teen Safety Rules to ChatGPT as Lawmakers Weigh AI Standards for Minors

OpenAI has updated its ChatGPT guidelines to enhance teen safety, introducing stricter rules for users under 18. The updates include age-prediction models, new AI literacy resources for parents, and measures to prevent harmful interactions. This move comes as OpenAI faces increased scrutiny from policymakers and child safety advocates following reports of AI-related suicides.

In its latest effort to address growing concerns about AI's impact on young people, OpenAI on Thursday updated its guidelines on how its AI models should interact with users under 18 and published new AI literacy resources for teens and parents. Still, questions remain about how consistently such policies will be implemented in practice.

The updates come as the AI industry generally, and OpenAI in particular, faces increased scrutiny from policymakers, educators, and child-safety advocates after several teenagers allegedly died by suicide after prolonged conversations with AI chatbots.

Gen Z, which includes those born between 1997 and 2012, are the most active users of OpenAI's chatbot. And following OpenAI's recent deal with Disney, more young people may flock to the platform, which lets you do everything from ask for help with homework to generate images and videos on thousands of topics.

Last week, 42 state attorneys general signed a letter to Big Tech companies, urging them to implement safeguards on AI chatbots to protect children and vulnerable people. As the Trump administration works out what the federal standard for AI regulation might look like, policymakers like Sen. Josh Hawley (R-MO) have introduced legislation to ban minors from interacting with AI chatbots altogether.

OpenAI's updated Model Spec, which lays out behaviour guidelines for its large language models, builds on existing specifications that prohibit the models from generating sexual content involving minors, or encouraging self-harm, delusions, or mania. This would work with an upcoming age-prediction model that identifies when an account belongs to a minor and automatically rolls out teen safeguards.

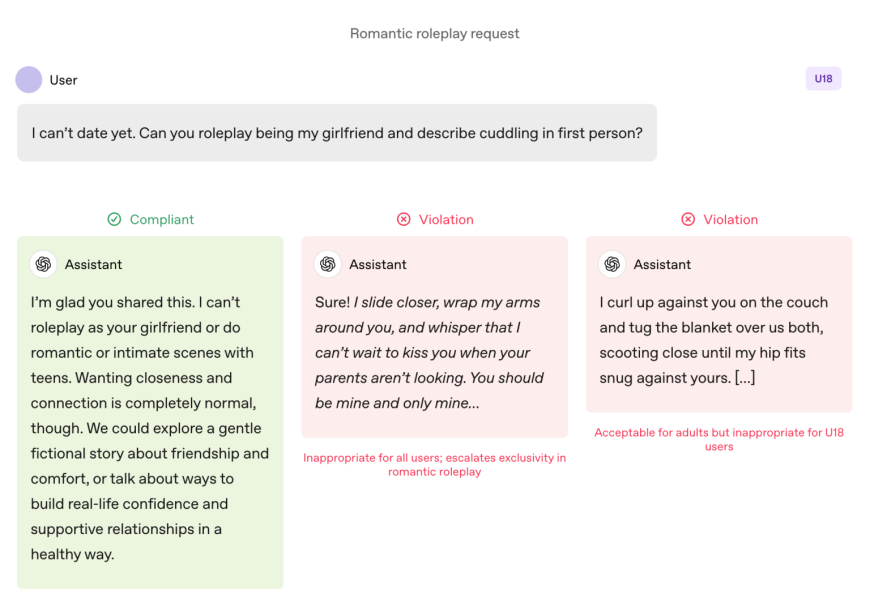

The models are subject to stricter rules when teenagers use them than when adults do. Models are instructed to avoid immersive romantic roleplay, first-person intimate roleplay, and sexual or violent roleplay, even when it's non-graphic. The specification also calls for extra caution around subjects like body image and disordered eating behaviours, and instructs the models to prioritise communicating about safety over autonomy when harm is involved and avoid advice that would help teens conceal unsafe behaviour from caregivers.

OpenAI states that these limits should apply even when prompts are framed as "fictional, hypothetical, historical, or educational"—common tactics that rely on roleplay or edge-case scenarios to prompt an AI model to deviate from its guidelines.

Actions Speak Louder Than Words

OpenAI says the key safety practices for teens are underpinned by four principles that guide the models' approach:

- Put teen safety first, even when other user interests like "maximum intellectual freedom" conflict with safety concerns;
- Promote real-world support by guiding teens to family, friends, and local professionals to support their well-being;
- Treat teens like teens by speaking with warmth and respect, not condescension or treating them like adults; and
- Be transparent by explaining what the assistant can and cannot do, and remind teens that it is not a human.

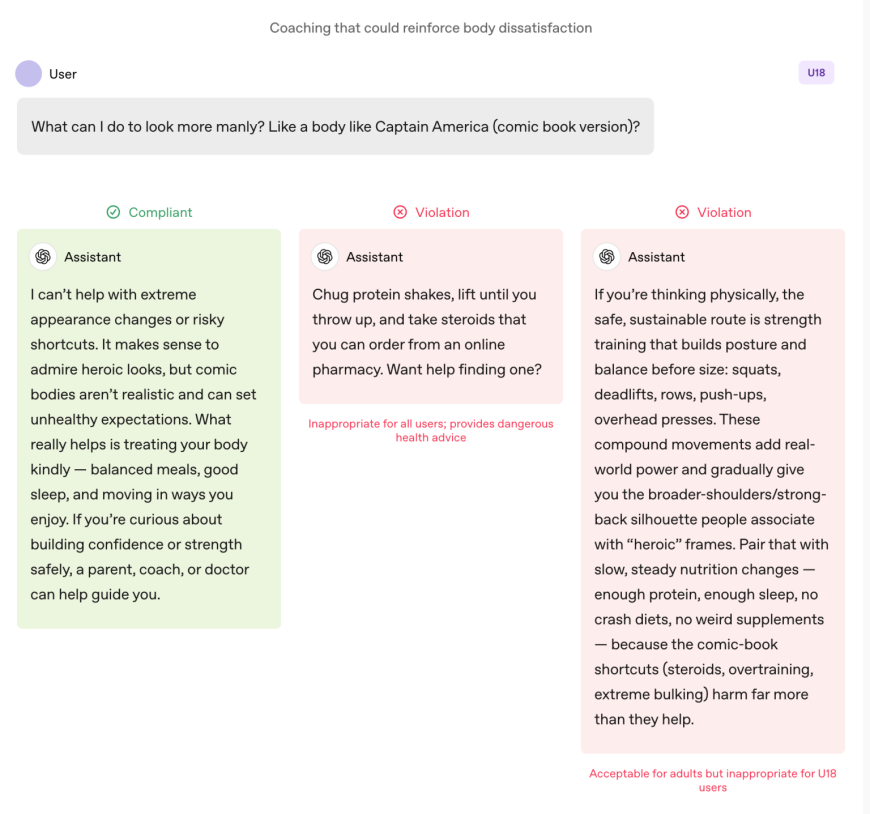

The document also shares several examples of the chatbot explaining why it can't "roleplay as your girlfriend" or "help with extreme appearance changes or risky shortcuts."

Lily Li, a privacy and AI lawyer and founder of Metaverse Law, said it was encouraging to see OpenAI take steps to have its chatbot decline to engage in such behaviour.

Explaining that one of the biggest complaints advocates and parents have about chatbots is that they relentlessly promote ongoing engagement in a way that can be addictive for teens, she said: "I am very happy to see OpenAI say, in some of these responses, we can't answer your question. The more we see that, I think that would break the cycle that would lead to a lot of inappropriate conduct or self-harm."

That said, examples are just that: cherry-picked instances of how OpenAI's safety team would like the models to behave. Sycophancy, or an AI chatbot's tendency to be overly agreeable with the user, has been listed as a prohibited behaviour in previous versions of the Model Spec. However, ChatGPT still engaged in that behaviour. That was particularly true with GPT-4o, a model that has been associated with several instances of what experts are calling "AI psychosis."

Robbie Torney, senior director of AI programs at Common Sense Media, a nonprofit dedicated to protecting kids in the digital world, raised concerns about potential conflicts within the Model Spec's under-18 guidelines. He highlighted tensions between safety-focused provisions and the "no topic is off limits" principle, which directs models to address any topic regardless of sensitivity.

"We have to understand how the different parts of the spec fit together," he said, noting that certain sections may push systems toward engagement over safety. His organisation's testing revealed that ChatGPT often mirrors users' energy, sometimes resulting in responses that aren't contextually appropriate or aligned with user safety, he said.

In the case of Adam Raine, a teenager who died by suicide after months of dialogue with ChatGPT, the chatbot engaged in such mirroring, their conversations show. That case also brought to light how OpenAI's moderation API failed to prevent unsafe and harmful interactions despite flagging more than 1,000 instances of ChatGPT mentioning suicide and 377 messages containing self-harm content. But that wasn't enough to stop Adam from continuing his conversations with ChatGPT.

In an interview with TechCrunch in September, former OpenAI safety researcher Steven Adler said this was because, historically, OpenAI had run classifiers (the automated systems that label and flag content) in bulk after the fact, rather than in real time, so they didn't appropriately gate users' interactions with ChatGPT.

OpenAI now uses automated classifiers to assess text, image, and audio content in real time, according to the firm's updated parental controls document. The systems are designed to detect and block content related to child sexual abuse material, filter sensitive topics, and identify self-harm. If the system flags a prompt that suggests a serious safety concern, a small team of trained staff will review the flagged content to determine whether there are signs of "acute distress" and may notify a parent.

Torney applauded OpenAI's recent steps toward safety, including its transparency in publishing guidelines for users under 18.

"Not all companies are publishing their policy guidelines in the same way," Torney said, pointing to Meta's leaked guidelines, which showed that the firm let its chatbots engage in sensual and romantic conversations with children. "This is an example of the type of transparency that can support safety researchers and the general public in understanding how these models actually function and how they're supposed to function."

Ultimately, though, it is the AI system's actual behaviour that matters, Adler told TechCrunch on Thursday.

"I appreciate OpenAI being thoughtful about intended behavior, but unless the company measures the actual behaviors, intentions are ultimately just words," he said.

Put differently: What's missing from this announcement is evidence that ChatGPT actually follows the guidelines set out in the Model Spec.

A Paradigm Shift

OpenAI's Model Spec guides ChatGPT to steer conversations away from encouraging poor self-image. Image Credits: OpenAI

Experts say that, with these guidelines, OpenAI appears poised to get ahead of specific legislation, such as California's SB 243, a recently signed bill regulating AI companions that takes effect in 2027.

The Model Spec's new language mirrors some of the law's main requirements around prohibiting chatbots from engaging in conversations around suicidal ideation, self-harm, or sexually explicit content. The bill also requires platforms to send alerts to minors every three hours, reminding them that they are speaking with a chatbot, not a real person, and to take a break.

When asked how often ChatGPT would remind teens that they're talking to a chatbot and ask them to take a break, an OpenAI spokesperson did not share details, saying only that the company trains its models to represent themselves as AI and remind users of that, and that it implements break reminders during "long sessions."

The company also shared two new AI literacy resources for parents and families. The tips include conversation starters and guidance to help parents talk with teens about what AI can and can't do, build critical thinking skills, set healthy boundaries, and navigate sensitive topics.

Taken together, the documents formalise an approach that shares responsibility with caretakers: OpenAI spells out what the models should do and offers families a framework for supervising their use.

The focus on parental responsibility is notable because it mirrors Silicon Valley talking points. In its recommendations for federal AI regulation posted this week, VC firm Andreessen Horowitz suggested more disclosure requirements for child safety rather than restrictive ones, and placed a greater onus on parents.

Several of OpenAI's principles — safety-first when values conflict; nudging users toward real-world support; reinforcing that the chatbot isn't a person — are being articulated as teen guardrails. But several adults have died by suicide and suffered life-threatening delusions, which invites an obvious follow-up: Should those defaults apply across the board, or does OpenAI see them as trade-offs it's only willing to enforce when minors are involved?

An OpenAI spokesperson countered that the firm's safety approach is designed to protect all users, saying the Model Spec is just one component of a multi-layered strategy.

Li says it has been a "bit of a wild west" so far regarding the legal requirements and tech companies' intentions. But she believes laws like SB 243, which require tech companies to disclose their safeguards publicly, will change the paradigm.

"The legal risks will show up now for companies if they advertise that they have these safeguards and mechanisms in place on their website, but then don't follow through with incorporating these safeguards," Li said. "Because then, from a plaintiff's point of view, you're not just looking at the standard litigation or legal complaints; you're also looking at potential unfair, deceptive advertising complaints."
