Pentagon says Anthropic’s AI limits pose national security concerns

The U.S. Department of Defence warns that Anthropic’s restrictions on AI use could create national security risks, highlighting tensions between safety policies and military needs.

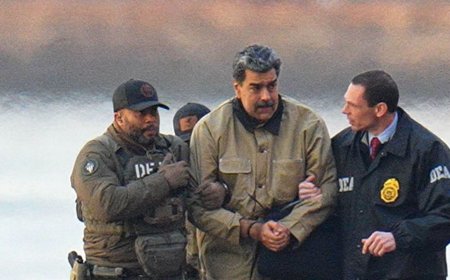

The U.S. Department of Defence stated on Tuesday evening that Anthropic represents an “unacceptable risk to national security,” marking its first formal response to the company’s legal challenges against Defence Secretary Pete Hegseth’s decision to classify Anthropic as a supply-chain risk last month. As part of its legal action, Anthropic had asked the court to temporarily prevent the Department of Defence from enforcing that designation.

In a 40-page filing submitted to a federal court in California, the Department of Defence argued that Anthropic could potentially “attempt to disable its technology or preemptively alter the behaviour of its model” before or during military operations if the company believed its internal “red lines” were being violated.

Anthropic entered into a $200 million agreement with the Pentagon last summer to deploy its AI systems in classified environments. However, during subsequent negotiations over contract terms, the company expressed concerns about certain applications of its technology. Specifically, Anthropic stated it did not want its AI used for mass surveillance of U.S. citizens and indicated that the systems were not yet suitable for decisions involving the targeting or firing of lethal weapons. The Pentagon rejected these conditions, maintaining that private companies should not determine how military technology is used.

In response to the Department of Defence’s position, an Anthropic spokesperson referenced a statement made by CEO Dario Amodei in late February: “Anthropic understands that the Department of War, not private companies, makes military decisions. We have never raised objections to particular military operations nor attempted to limit use of our technology in an ad hoc manner.”

Chris Mattei, an attorney specialising in First Amendment law and a former Justice Department official, said there has been no investigation to substantiate the Department of Defence’s concerns that Anthropic might disable or modify its AI systems during military operations. Without such evidence, Mattei argued, the government’s position does not adequately justify labelling the company as an adversary.

“The government is relying completely on conjectural, speculative imaginings to justify a very, very serious legal step they’ve taken against Anthropic,” Mattei said. He added that the department failed to “articulate a credible or even comprehensible rationale for why Anthropic’s refusal to agree to an ‘all lawful use’ provision rendered it a supply chain risk as opposed to a vendor that DOD simply didn’t want to do business with.”

Several organisations have criticised the Department of Defence’s handling of the situation, suggesting that the agency could have terminated its contract rather than taking more aggressive action. Technology companies and employees, including individuals from OpenAI, Google, and Microsoft, as well as legal advocacy groups, have submitted amicus briefs in support of Anthropic.

In its lawsuits, Anthropic has argued that the Department of Defence’s actions violate its First Amendment rights and amount to punishment for its stated principles.

“In many ways, the government’s nonsensical arguments are themselves the best evidence that the administration’s conduct was plainly a retaliatory punishment for Anthropic’s refusal to agree to the government’s terms, which, contrary to the government’s brief, is a form of protected expression,” Mattei said.

A court hearing regarding Anthropic’s request for a preliminary injunction is scheduled for next Tuesday.

An Anthropic spokesperson stated that pursuing judicial review does not alter the company’s “longstanding commitment to harnessing AI to protect our national security,” but described the legal action as a “necessary step” to safeguard its business operations, customers, and partners.
