How Google Uses Historic News Data and AI to Predict Flash Floods

Google is combining historical news reports with artificial intelligence to improve flash flood prediction and deliver earlier warnings to vulnerable communities.

Flash floods rank among the deadliest weather disasters worldwide, killing more than 5,000 people every year. They are also some of the hardest events to forecast. Google now says it has found an unusual way to improve those forecasts: analysing news coverage.

Although people have collected large amounts of weather-related information over time, flash floods are often too brief and too localised to be measured comprehensively, unlike temperature patterns or even river flows, which are monitored more consistently. Because of that lack of data, deep learning models — which have become increasingly powerful in weather forecasting — have struggled to predict flash floods accurately.

To address that issue, Google researchers turned to Gemini, the company’s large language model, and used it to analyse 5 million news articles from around the world. From those reports, the researchers identified 2.6 million separate flood events and converted them into a geo-tagged time series dataset called “Groundsource.” According to Gila Loike, a product manager at Google Research, this is the first time the company has applied language models in this specific way. The research and dataset were made public on Thursday morning.
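As a rough illustration of what "geo-tagged time series" can mean in practice, the sketch below bins individual flood reports into grid cells and counts them per day. It is not Google's pipeline: the `FloodEvent` record, the 0.25-degree cell size, and the function names are all assumptions made for the example, and it starts from events that have already been extracted from articles.

```python
import math
from collections import Counter
from datetime import date
from typing import NamedTuple


class FloodEvent(NamedTuple):
    """Hypothetical record for one flood report extracted from a news article."""
    day: date
    lat: float
    lon: float


def grid_cell(lat, lon, cell_deg=0.25):
    """Map a coordinate to an integer grid-cell index (cell size is an assumption)."""
    return (math.floor(lat / cell_deg), math.floor(lon / cell_deg))


def to_time_series(events, cell_deg=0.25):
    """Count reported flood events per (grid cell, day)."""
    counts = Counter()
    for ev in events:
        counts[(grid_cell(ev.lat, ev.lon, cell_deg), ev.day)] += 1
    return counts


# Invented sample data, for illustration only.
events = [
    FloodEvent(date(2020, 1, 1), 10.1, 20.1),
    FloodEvent(date(2020, 1, 1), 10.2, 20.2),
    FloodEvent(date(2020, 1, 2), 10.1, 20.1),
]
series = to_time_series(events)
```

Counting per cell and per day gives a forecasting model a label for every place and date, including the many cell-days with no reported flood.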

Using Groundsource as a real-world reference point, the researchers then trained a model based on a Long Short-Term Memory, or LSTM, neural network. That model takes in global weather forecasts and produces the probability of flash floods occurring in a particular area.
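For readers unfamiliar with LSTMs, the sketch below shows the general shape of such a model: a sequence of forecast features goes in, and a single probability comes out. It is a minimal, untrained forward pass in plain Python, not Google's model. The feature choice, hidden size, weight initialisation, and function names are all assumptions made for illustration.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def init_params(input_size, hidden_size, seed=0):
    """Small random weights for the four LSTM gates plus a linear readout (untrained)."""
    rng = random.Random(seed)
    width = input_size + hidden_size
    W = {g: [[rng.uniform(-0.1, 0.1) for _ in range(width)]
             for _ in range(hidden_size)] for g in "ifog"}
    b = {g: [0.0] * hidden_size for g in "ifog"}
    w_out = [rng.uniform(-0.1, 0.1) for _ in range(hidden_size)]
    return W, b, w_out, 0.0


def lstm_step(x, h, c, W, b):
    """One standard LSTM step on input x with hidden state h and cell state c."""
    z = x + h  # concatenate the input and the previous hidden state

    def affine(gate):
        return [sum(w * v for w, v in zip(row, z)) + bias
                for row, bias in zip(W[gate], b[gate])]

    i = [sigmoid(a) for a in affine("i")]    # input gate
    f = [sigmoid(a) for a in affine("f")]    # forget gate
    o = [sigmoid(a) for a in affine("o")]    # output gate
    g = [math.tanh(a) for a in affine("g")]  # candidate cell values
    c_new = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c, i, g)]
    h_new = [ok * math.tanh(ck) for ok, ck in zip(o, c_new)]
    return h_new, c_new


def flood_probability(sequence, params, hidden_size):
    """Run the LSTM over a sequence of feature vectors and squash the readout to (0, 1)."""
    W, b, w_out, b_out = params
    h = [0.0] * hidden_size
    c = [0.0] * hidden_size
    for x in sequence:
        h, c = lstm_step(x, h, c, W, b)
    return sigmoid(sum(w * v for w, v in zip(w_out, h)) + b_out)


# Hypothetical inputs: two time steps of [rainfall forecast, soil moisture], scaled to 0..1.
forecast = [[0.1, 0.5], [0.3, 0.9]]
p = flood_probability(forecast, init_params(2, 4), hidden_size=4)
```

In a trained version, the weights would be fitted so that the output matches whether a flood was recorded for that area and date in a reference dataset such as Groundsource.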

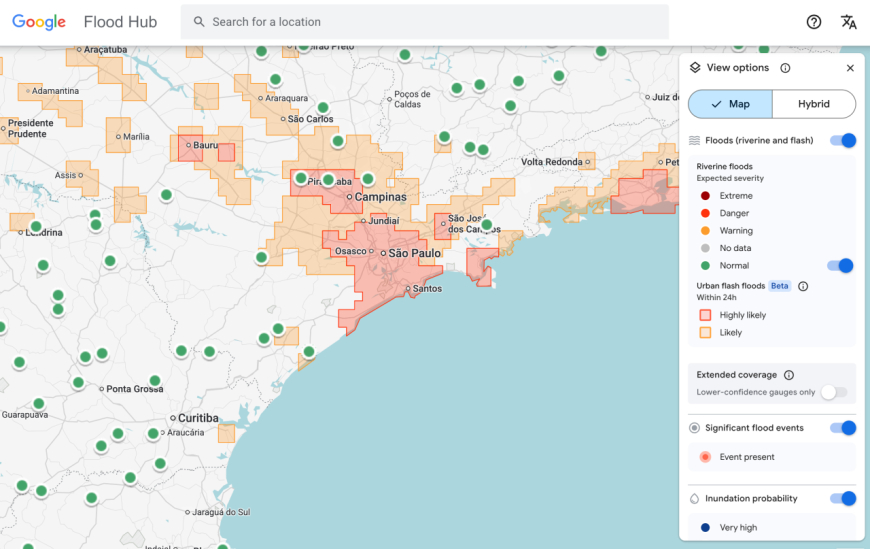

Google’s flash flood forecasting model now surfaces risk information for urban areas in 150 countries through the company’s Flood Hub platform, while also sharing data with emergency response agencies worldwide. António José Beleza, an emergency response official with the Southern African Development Community who tested the forecasting system with Google, said it helped his organisation react to flooding events more quickly.

The model still has important limitations. For example, it operates at relatively low resolution, flagging risk across areas of about 20 square kilometres. It is also less precise than the flood alert system used by the U.S. National Weather Service, partly because Google’s model does not use local radar data, which is used to track real-time precipitation.

Part of the project’s purpose, however, is to function in regions where local governments cannot afford expensive weather-monitoring infrastructure or where detailed meteorological records do not exist.

“Because we’re aggregating millions of reports, the Groundsource dataset actually helps rebalance the map,” Juliet Rothenberg, a program manager on Google’s Resilience team, told reporters this week. “It enables us to extrapolate to other regions where there isn’t as much information.”

Rothenberg said the team believes that using large language models to convert written qualitative material into quantitative datasets could also be useful for building datasets around other short-lived but important-to-predict events, such as heat waves and mudslides.

Marshall Moutenot, CEO of Upstream Tech — a company that uses similar deep learning models to forecast river flows for customers such as hydropower firms — said Google’s work is part of a broader push to assemble better datasets for deep learning-based weather forecasting. Moutenot is also a co-founder of dynamical.org, a group that curates machine learning-ready weather data for researchers and startups.

“Data scarcity is one of the most difficult challenges in geophysics,” Moutenot said. “Simultaneously, there’s too much Earth data, and then when you want to evaluate against truth, there’s not enough. This was a really creative approach to get that data.”
