The former Facebook insider shaping content moderation for the AI age

A former Facebook insider is helping redefine content moderation for the AI era, addressing challenges in automation, safety, and platform accountability.

When Brett Levenson left Apple in 2019 to take on a leadership role in business integrity at Facebook, the company was still dealing with the fallout from the Cambridge Analytica scandal. At the time, Levenson believed the platform’s content moderation challenges could be solved through improved technology.

However, he soon realised that the issue extended far beyond technical limitations. Human moderators were expected to absorb lengthy policy documents — sometimes translated poorly into different languages — and make complex decisions about flagged content within roughly 30 seconds. These decisions included whether to remove content, suspend accounts, or limit visibility. According to Levenson, the accuracy of these judgments hovered just above 50%, making the system unreliable and often too slow to prevent harm.

He described the process as reactive and inefficient, with enforcement typically happening well after harmful content had already circulated. As digital threats evolved and adversarial actors became more sophisticated, this approach proved increasingly inadequate. The emergence of AI-powered chatbots has added another layer of complexity, with incidents involving unsafe responses, including harmful guidance and the creation of inappropriate content, drawing widespread scrutiny.

This experience led Levenson to develop the concept of “policy as code,” an approach that transforms static moderation guidelines into dynamic, executable systems that can enforce rules in real time. That idea became the foundation for Moonbounce, a company he later founded.

Moonbounce recently raised $12 million in funding, with backing from Amplify Partners and StepStone Group. The company focuses on providing an additional layer of safety for platforms where content is generated, whether by users or by artificial intelligence systems.

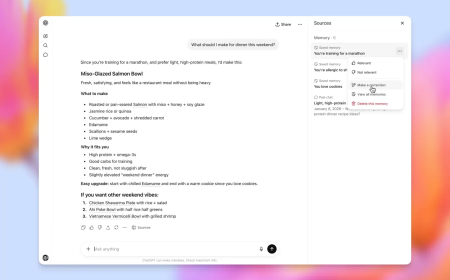

The platform uses its own large language model to interpret a company’s policy framework, evaluate content in real time, and respond in under 300 milliseconds. Depending on the configuration, it can either delay content distribution pending human review or block high-risk material immediately.
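As a rough illustration of the "policy as code" idea, the sketch below expresses moderation rules as data and evaluates content against them at request time. It is not Moonbounce's implementation: the names `Rule`, `Action`, `evaluate`, and `RULES` are hypothetical, and the keyword checks are simple stand-ins for the language model the article says interprets each policy.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Action(Enum):
    ALLOW = "allow"
    HOLD_FOR_REVIEW = "hold_for_review"  # delay distribution pending human review
    BLOCK = "block"                      # stop high-risk material immediately


@dataclass(frozen=True)
class Rule:
    name: str
    matches: Callable[[str], bool]  # assumption: stand-in for a model-based check
    action: Action


# Higher number means a more severe outcome.
_SEVERITY = {Action.ALLOW: 0, Action.HOLD_FOR_REVIEW: 1, Action.BLOCK: 2}


def evaluate(content: str, rules: list[Rule]) -> Action:
    """Return the most severe action among all rules that match the content."""
    decision = Action.ALLOW
    for rule in rules:
        if rule.matches(content) and _SEVERITY[rule.action] > _SEVERITY[decision]:
            decision = rule.action
    return decision


# Hypothetical policy: each written guideline becomes one executable rule.
RULES = [
    Rule("possible_spam", lambda text: "buy now" in text.lower(), Action.HOLD_FOR_REVIEW),
    Rule("credential_phishing", lambda text: "send me your password" in text.lower(), Action.BLOCK),
]

if __name__ == "__main__":
    for message in ["Hello there", "BUY NOW, limited offer", "Please send me your password"]:
        print(message, "->", evaluate(message, RULES).value)
```

Keeping the rules as data rather than hard-coded branches means a policy change is a change to the rule list, not to the enforcement loop, which is one plausible reading of what makes a policy "executable".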

Moonbounce currently serves three primary categories of clients: platforms that rely on user-generated content, such as dating applications; companies developing AI-driven characters or companions; and businesses offering AI-based image generation tools.

According to Levenson, the system processes more than 40 million content evaluations daily and supports platforms with over 100 million daily active users. Its customer base includes companies such as Channel AI, Civitai, Dippy AI, and Moescape.

Levenson believes that safety can evolve from being a compliance requirement into a product advantage. Traditionally treated as an afterthought, safety mechanisms can now be integrated directly into user experiences, offering both protection and differentiation.

The broader industry is beginning to adopt similar approaches. Tinder, for example, has used AI-powered moderation tools and has reportedly achieved substantial gains in identifying problematic content.

Investors also see growing urgency in this space. Lenny Pruss of Amplify Partners said that as large language models become central to modern applications, real-time, objective safeguards become critical infrastructure.

AI companies are increasingly facing legal and reputational risks tied to content moderation failures. High-profile incidents involving harmful chatbot interactions and misuse of generative tools have intensified scrutiny, pushing companies to seek external solutions rather than relying solely on internal systems.

Moonbounce positions itself as an independent layer between users and AI systems. Because it focuses exclusively on enforcing rules at runtime, it is not overwhelmed by the broader conversational context that chatbots must process.

Levenson runs the company alongside co-founder Ash Bhardwaj, who previously worked with him at Apple on large-scale cloud and AI infrastructure. The team is now working on a feature called “iterative steering,” designed to intervene in sensitive conversations and guide AI responses toward safer and more constructive outcomes.

This approach aims to go beyond simply blocking harmful interactions. Instead, it seeks to redirect conversations in real time, ensuring that AI systems respond in ways that are both empathetic and helpful, particularly in high-risk situations.

Despite speculation about potential acquisition by larger technology firms such as Meta, Levenson expressed concerns about limiting access to the technology. He emphasised the importance of keeping such systems broadly available rather than restricting them within a single organisation.

The evolution of Moonbounce reflects a broader shift in how the tech industry approaches content moderation, moving from reactive enforcement to proactive, integrated safety systems designed for the realities of the AI-driven internet.
