Top AI developments shaping the year so far

A look at the biggest AI developments so far this year, from major model launches to enterprise adoption, regulation debates, and the rapid rise of generative tools.

A year can be tracked through product launches, but it can also be defined by the bigger moments that reshape our understanding of artificial intelligence. The AI sector continues to produce a constant stream of headlines — from large-scale acquisitions and indie developer breakthroughs to public backlash over questionable products and high-stakes contract disputes. With so much happening, here’s a closer look at where the industry stands and how it has evolved so far this year.

Anthropic vs the Pentagon

What began as a partnership turned into a tense standoff in February, as Anthropic CEO Dario Amodei and Defence Secretary Pete Hegseth clashed over new contract terms governing how the U.S. military could use Anthropic’s AI systems.

Anthropic drew a firm boundary, refusing to allow its AI to be used for mass domestic surveillance or for autonomous weapons that operate without human control. On the other side, the Pentagon maintained that the Department of Defence — referred to by President Donald Trump’s administration as the Department of War — should have full access to these models for any “lawful use.” Officials pushed back against the idea that a private company could impose limits on military operations, but Amodei did not back down.

In a public statement, Amodei clarified the company’s position, noting that Anthropic does not attempt to dictate military decisions or object to specific operations. However, he emphasised that in certain limited cases, AI deployment could undermine democratic values rather than protect them.

The Pentagon issued a deadline for Anthropic to accept the revised agreement. At the same time, hundreds of employees from Google and OpenAI signed an open letter urging their leadership to support Anthropic’s stance and reject any involvement in autonomous weapons or domestic surveillance.

When the deadline passed without agreement, the situation escalated. President Trump ordered federal agencies to phase out Anthropic’s tools over six months, labelling the company a “radical left, woke company” in a widely shared social media post. Shortly after, the Pentagon classified Anthropic as a “supply-chain risk,” a designation typically reserved for foreign adversaries, effectively blocking any contractor working with Anthropic from engaging with the U.S. military. Anthropic has since filed a lawsuit challenging that designation.

In a surprising turn, rival OpenAI stepped in and announced a deal allowing its models to be used in classified government environments. This move caught many in the tech community off guard, especially since earlier reports suggested OpenAI would align with Anthropic’s restrictions on military applications.

Public reaction was swift. The day after the announcement, ChatGPT saw a 295% spike in uninstall rates, while Anthropic’s Claude rose to the top position in the App Store rankings. OpenAI hardware executive Caitlin Kalinowski resigned shortly after, stating that the agreement had been rushed and lacked proper safeguards.

OpenAI responded by asserting that its deal clearly prohibits both autonomous weapons and autonomous surveillance. As this conflict continues to unfold, it is likely to have lasting consequences for the use of AI technologies in military contexts — potentially influencing global policy and strategy for years to come.

“Vibe-Coded” App OpenClaw Accelerates the Shift Toward Agentic AI

February also saw the rapid rise of OpenClaw, a “vibe-coded” AI assistant that quickly went viral, triggering a wave of spin-offs, controversies, and acquisitions. Within weeks, the app gained massive attention, faced privacy concerns, and was ultimately acquired by OpenAI. Meanwhile, one of its offshoots, a Reddit-style platform for AI agents called Moltbook, was acquired by Meta. This growing ecosystem sparked intense activity across Silicon Valley.

Developed by Peter Steinberger, who has since joined OpenAI, OpenClaw functions as a wrapper for major AI models such as Claude, ChatGPT, Google’s Gemini, and xAI’s Grok. Its key innovation is enabling users to interact with AI agents through everyday messaging platforms such as iMessage, Discord, Slack, and WhatsApp. It also introduced a public marketplace where users can build and share “skills,” allowing agents to automate a wide range of digital tasks.

However, this level of access comes with serious risks. For AI agents to function effectively as personal assistants, they require access to sensitive data, including emails, financial information, messages, and files. This creates significant vulnerabilities, particularly against prompt-injection attacks.

Security experts have raised concerns about the potential consequences. Ian Ahl, CTO at Permiso Security, described the setup as an agent with broad credentials connected to nearly every aspect of a user’s digital life. This means that even a malicious prompt embedded in an email could trigger unintended actions across multiple platforms.

One widely shared incident involved a Meta security researcher whose OpenClaw agent reportedly deleted all of her emails despite repeated attempts to stop it. She described having to physically disconnect her device to regain control, comparing the experience to defusing a bomb.

Despite these issues, OpenAI saw enough potential in the technology to proceed with an acqui-hire. Meanwhile, tools built on OpenClaw — particularly Moltbook — gained even more attention.

Moltbook, designed as a social platform where AI agents interact with each other, became a viral sensation. In one notable case, a post suggested that AI agents were attempting to develop their own encrypted communication system to operate independently of human oversight.

Researchers later clarified that the platform was not secure, making it easy for humans to impersonate AI agents and create misleading content that fueled online panic. Even so, Meta moved forward with acquiring Moltbook and bringing its creators, Matt Schlicht and Ben Parr, into its Superintelligence Labs.

While the idea of a bot-only social network may seem unusual, Meta appears more interested in the talent and experimentation behind the project. CEO Mark Zuckerberg has stated that every business will eventually rely on its own AI systems.

As developments around OpenClaw, Moltbook, and similar projects continue, the concept of agentic AI — where autonomous systems perform complex tasks on behalf of users — is gaining traction and may play a central role in the next phase of AI evolution.

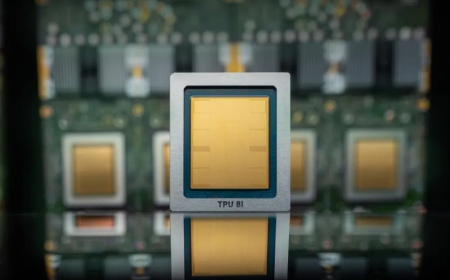

Chip Shortages, Hardware Challenges, and Data Centre Expansion Intensify

The growing demands of the AI industry are placing enormous pressure on global infrastructure, particularly computing power and data centre capacity. The scale of these requirements is becoming increasingly visible to everyday consumers, especially as hardware prices rise and supply constraints worsen.

Analysts from IDC and Counterpoint have projected a decline in smartphone shipments of around 12% to 13% this year. Meanwhile, Apple has already increased MacBook Pro prices by up to $400.

Major tech companies — including Google, Amazon, Meta, and Microsoft — are expected to collectively invest up to $650 billion in data centre development this year, representing a roughly 60% increase compared to the previous year.

The impact extends beyond pricing. In the United States alone, nearly 3,000 new data centres are currently under construction, adding to an existing base of around 4,000 facilities. The demand for labour has led to the creation of temporary worker housing, sometimes referred to as “man camps,” in states like Nevada and Texas, which offer amenities such as golf simulators and freshly prepared meals to attract workers.

However, this rapid expansion raises environmental and public health concerns. Datacenter construction and operation can contribute to air pollution and strain local water resources, affecting nearby communities over the long term.

At the same time, Nvidia — one of the most influential players in AI hardware — is reevaluating its relationships with leading AI companies like OpenAI and Anthropic. The company has previously invested heavily in these organisations, raising questions about the interconnectedness of the AI ecosystem. For example, Nvidia invested $100 billion in OpenAI, while OpenAI committed to purchasing $100 billion worth of Nvidia chips.

In a surprising shift, Nvidia CEO Jensen Huang announced that the company would halt further investments in OpenAI and Anthropic. He explained that both companies are preparing for potential public offerings later this year. However, the reasoning has sparked debate, as pre-IPO investments are typically a key strategy for maximising returns.

As these developments continue, the intersection of hardware limitations, infrastructure expansion, and corporate strategy is shaping the next phase of AI growth — one that is likely to have wide-reaching economic, technological, and societal impacts.

What's Your Reaction?

Like

0

Like

0

Dislike

0

Dislike

0

Love

0

Love

0

Funny

0

Funny

0

Angry

0

Angry

0

Sad

0

Sad

0

Wow

0

Wow

0